OpenAI, the maker of ChatGPT, recently reached an agreement with the US Department of Defence allowing its models to be used for classified military work. This got your reporter thinking: how is AI used in warfare right now?

Next time you visit your therapist, they might ask a simple question: how is your relationship with your... AI model?

And if you say “complicated”, you might have a point.

Let’s start with cybersecurity. On the digital front, the Iran war triggered a surge in geopolitical cyberattacks, which are now deployed right alongside physical weapons.

And with the rise of AI deepfakes and highly personalised phishing emails, experts warn that you can no longer rely on what you see and hear.

But what about strategy simulation? Before reaching the battlefield, AI is tested in war games.

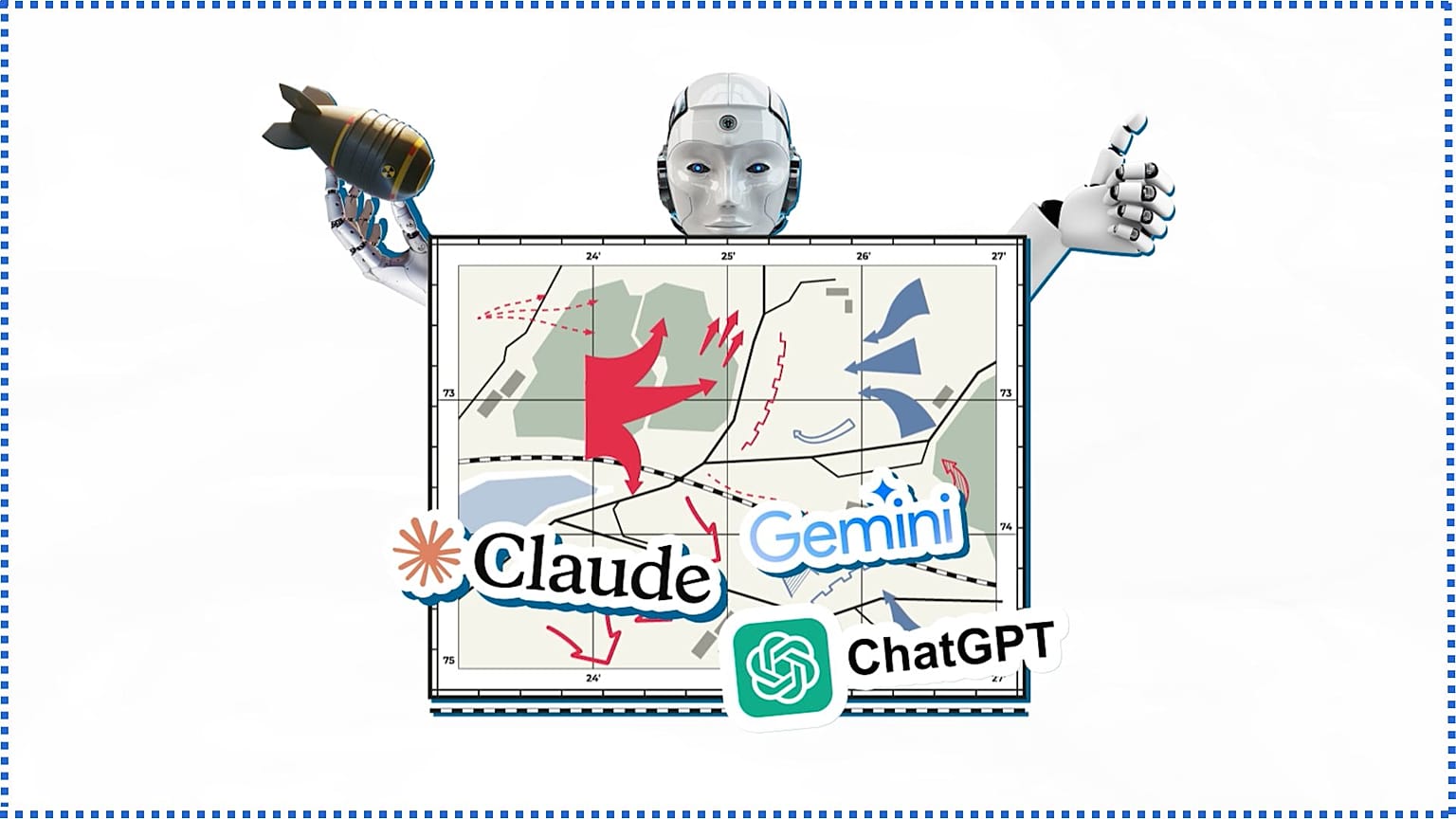

In a recent study, models including ChatGPT, Claude, and Gemini were placed into simulated crises.

The results were alarming: in every game, at least one AI model escalated the conflict by threatening to use nuclear weapons.

Finally, during recent airstrikes on Iran, the US military relied on Anthropic's Claude to identify targets.

But after Anthropic refused the Pentagon unrestricted access over ethical concerns, OpenAI immediately stepped in to take the contract.

OpenAI insists the agreement strictly prohibits domestic mass surveillance and requires human oversight for weapons.

Defending the deal, OpenAI's Sam Altman posted: "We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place."

So it seems the deal will lead either to a greater commitment to humanity or to more complications and dangers.

And as I told you, it is “complicated”. Right, ChatGPT?

Watch the Euronews video in the player above for the full story.