Lensa's AI-generated "magic avatars" have been a runaway hit on social media. Why are artists warning against them?

If you’ve been anywhere near social media lately, you may have noticed some of your friends posting otherworldly, digitally animated portraits of themselves.

Even celebrities like Chance the Rapper and Megan Fox have jumped on the bandwagon.

The portraits look like they were made by digital artists, and in a way, they were. But no individual artist is getting paid for them, or even getting credit.

That’s because these works weren’t created by a human. They were created by a machine.

The Lensa AI app by Prisma Labs uses artificial intelligence to transform your selfies into customised portraits, allowing users to be whoever they choose to be.

But while it’s become a runaway hit on social media, the app has drawn the ire of digital artists, who claim the works it generates are based on stolen art.

New to the story? Here’s what you need to know.

How does Lensa work for users?

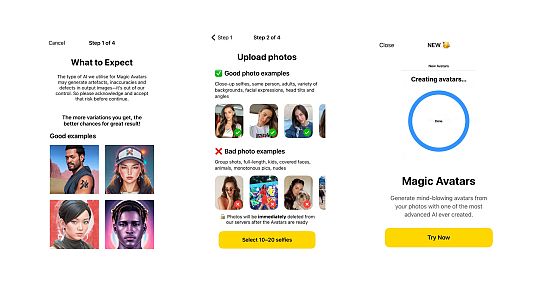

Creating these digital portraits using Lensa AI is simple and relatively inexpensive, two facts that have no doubt contributed to the app’s meteoric rise in recent weeks.

According to Statista, Lensa AI has been downloaded more than 5 million times since November, when the “magic avatar” feature was introduced.

A subscription normally costs about 100 euros per year, but users can benefit from a 7-day free trial. Accessing the “magic avatar” function, which generates 50 fantastical portraits, costs an additional 4 euros for everyone.

To get access to their portraits, users need to upload 10 to 20 selfies to the app.

Lensa prompts them to choose a variety of different angles and facial expressions to get the best results, and warns them that there may be “inaccuracies or defects” in the output images.

Those defects sometimes present as extra arms and legs, or heads that turn at unnatural angles.

Once the app has run a user’s photos through its AI model, which takes about 10 minutes, it spits out a variety of avatars. Users can then save them onto their devices and share them freely on any social media platform.

As far as data protection goes, Prisma Labs wrote in a lengthy FAQ that users’ photos are deleted from its servers immediately after the avatars are generated.

The avatars themselves are stored “as long as it takes to provide our users with the service” and Prisma Labs says all of the avatars belong to the user who created them.

Why are artists worried about Lensa?

As millions of users around the world fell in love with their magic avatars, a wave of anxiety began sweeping through artist communities online.

Not only were these AI-generated portraits taking away commission opportunities for digital artists, but some of those artists’ artworks were being used to train the AI model that generated them… often without their permission.

On Instagram, digital artist Meg Rae posted a warning that was widely shared across the app:

“Do not use the Lensa app’s ‘Magic Avatar’ generator,” she wrote. “It uses Stable Diffusion, an AI art model, to sample artwork from artists that never consented to their work being used. This is art theft”.

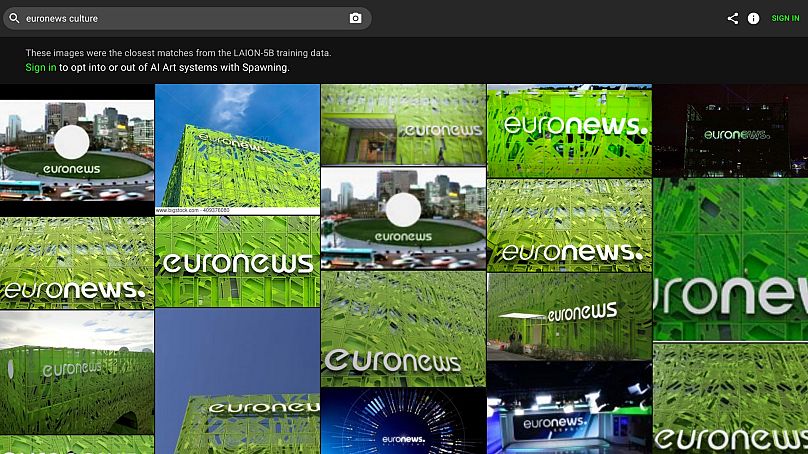

It’s true that Lensa uses a copy of the open-source neural network model Stable Diffusion to train its AI. The model was itself trained on a pool of billions of images scraped from all corners of the internet and compiled into a dataset called LAION-5B.

Stable Diffusion then uses these images to learn techniques that it applies to generate new works, which Lensa claims “are not replicas of any particular artist’s artwork”.

Copyright law regarding these datasets is murky. LAION’s website says that because the datasets only contain URLs of images, they serve as indexes to the internet, which do not violate copyright law.

The CEO of Stability AI, Stable Diffusion’s creator, also defended the AI model and its machine learning process.

Emad Mostaque told The Verge that scraping public images from the internet - even copyrighted ones - was legal in the US and the UK and that the open-source nature of Stable Diffusion meant that any copyright infringement was the end-user’s responsibility.

But even if AI art can clear legal hurdles, the ethics surrounding these image generators are far from straightforward.

What can artists do to take back control?

The virality of Lensa AI’s magic avatars sparked a conversation among artists about how they can regain control of their work in the age of machine learning.

One of the tools available to them is the search engine haveibeentrained.com, which was developed by Berlin-based artists Mat Dryhurst and Holly Herndon.

It allows artists to see if their work is included in the LAION datasets used to train AI, and then to opt out if they choose.

Dryhurst told Euronews Culture via email that Stability AI and LAION have agreed to honour any opt-out request made by artists.

The European Union’s General Data Protection Regulation (GDPR) also allows EU citizens to contact LAION directly to request the removal of any identifying information, like their name or likeness, from the dataset.

But Dryhurst said that beyond the technical opt-out procedure, there needs to be a wider conversation about consent and collaboration in the art world.

“A new protocol of consent, which is our ultimate interest, is easier to imagine executed technically than it is socially, but the social dimension is probably the most important,” Dryhurst told Euronews Culture. “We hope that this new suite of tools will lead to more attribution and profit sharing between artists, and a greater discursive shift towards acknowledging how interdependent creative worlds are”.

Is AI inherently anti-artist?

“I am not against artificial intelligence, I want to make that clear,” said Spanish artist Amaya Díaz. “If it’s a tool for us to use, and if people learn to value what we put in our work, I think it will be fine.”

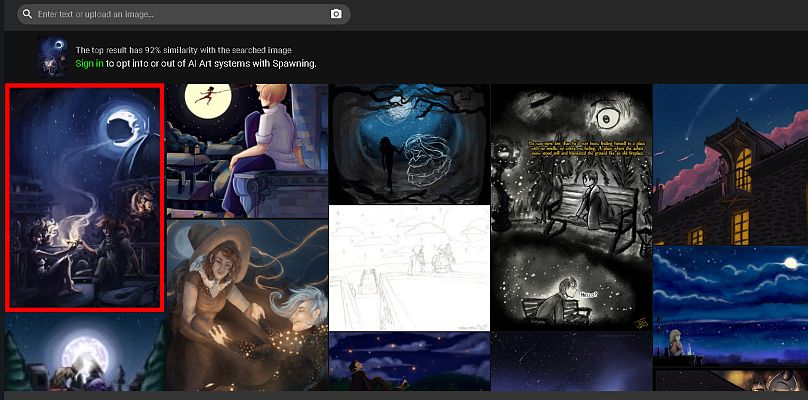

Díaz, a Spanish comic artist and illustrator who goes by Stelladia, said the Lensa frenzy inspired them to search for their artwork on haveibeentrained.com.

They found some of their very old artworks in the dataset, which they had posted on the platform DeviantArt while a student.

“I wasn’t really surprised,” Díaz told Euronews Culture. “We as artists are very used to our work being used without consent. I think the reason this is becoming such a big deal is because we are very exhausted.”

Being an artist is hard, often thankless work, Díaz said. It takes years for artists to hone their skills, getting paid fairly and on time is a constant struggle, and there’s a lot of self-promotion involved that goes against many artists’ introverted natures.

“When you see that your work is being used for something that is going to maybe put you out of the market, it’s really exhausting,” they said. “You just feel disempowered and you just want to go to bed and stop trying.”

Dryhurst said he believes the recent uproar around AI-generated art is likely due to a growing frustration with the way society has devalued art over the past century.

“I think we are still in the dress rehearsal period (of AI art), and so there is no reason to panic just yet,” he said. “I think it is important for artists to assert control over their data, and I think that will create the prerequisite conditions for all kinds of new and exciting configurations to emerge”.

For Díaz, the hope is that as artificial intelligence evolves, so will human mindsets.

“Artificial intelligence is just doing a very empty mix of already existing stuff,” they said. “If you look closely at it, you will see that there is no consciousness behind it. I really hope people can understand what we (artists) do when we are creating something [...] and hopefully people will look forward to human art”.