It’s all change at Twitter; the company has a new boss, new rules on posting media of private individuals, and now a new reporting process.

It’s all change at Twitter. In the last couple of weeks, Jack Dorsey stood down as CEO and was replaced by Parag Agrawal, and the company implemented a new ban on posting media of private individuals.

Now, it is revamping its tweet reporting process, amid widespread complaints that the current system is being abused.

In a blog post on Tuesday, the company revealed it was testing an overhauled reporting process, brought about because of user research and an understanding that "today’s reporting process wasn’t making enough people feel safe or heard".

The move follows numerous incidents in which activists, disinformation researchers, and others had their accounts suspended after malicious accounts abused the reporting system by mass-reporting them.

Twitter user Chad Loder, an anti-fascist activist, documented a series of these incidents in a Twitter thread.

Twitter admitted on Friday that the rollout of its new policy on posting media of private individuals had not been smooth, and had been subject to malicious use.

"We were informed of a significant number of malicious and coordinated reports, and unfortunately our teams made several mistakes," Twitter told AFP.

"We have rectified these errors and are conducting an internal investigation to ensure this policy is used appropriately," the firm added.

Twitter making it ‘easier to report harmful behaviour’

The new process is being tested within a small group in the US, Twitter said in its blog post. It likened the new system to a doctor diagnosing a health problem.

"Say you're in the midst of an emergency medical situation. If you break your leg, the doctor doesn’t say, is your leg broken? They say, where does it hurt? The idea is, first let’s try to find out what’s happening instead of asking you to diagnose the issue," the post states.

What this means in practice is that users will no longer have to pinpoint how behaviour on Twitter has violated a specific element of the company’s code of conduct. "Instead it asks them what happened," the blog states.

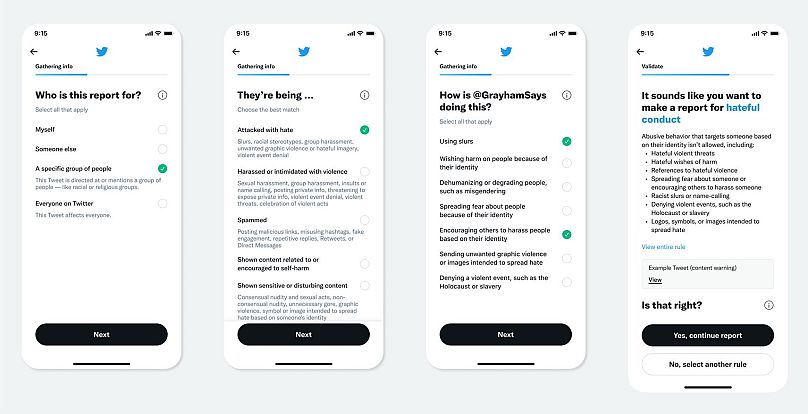

The new process looks like this.

"In moments of urgency, people need to be heard and feel supported. Asking them to open the medical dictionary and saying, ‘point to the one thing that's your problem’ is something people aren't going to do," said Brian Waismeyer, a data scientist on the health user experience team that spearheaded this new process.

"If they're walking in to get help, what they're going to do well is describe what is happening to them in the moment".

The company says people from "marginalised communities", such as women, people of colour, and people from the LGBT community, were all included in the research and development of the new system.

The new reporting system will be refined during the testing phase with the small group in the US, before being rolled out more widely in the new year.