US transport safety authorities are investigating Tesla after a series of high-profile crashes involving self-driving cars and emergency services vehicles.

The investigation into car crashes involving Tesla's "Autopilot" self-driving system deepened this week as American traffic safety authorities sent demands for data on automated vehicles to 12 other carmakers.

On Monday, the US National Highway Traffic Safety Administration (NHTSA) sent letters to major manufacturers including Ford, Toyota, Volkswagen and BMW asking for documents relating to vehicles featuring Level Two "Advanced Driver Assistance Systems".

Level Two vehicles are "partially automated" and can control driving systems like steering, braking and acceleration automatically.

The NHTSA's request for data from 12 major car manufacturers comes two weeks after a similar demand for Autopilot crash data from Tesla.

What is the NHTSA asking for?

The safety agency requested the companies provide details of how many Level Two vehicles they had sold in the United States, the software they are running and the total distance covered with automation features switched on.

Manufacturers must also provide the NHTSA with reports on crashes involving automated vehicles, lawsuits involving the technology and customer complaints about their Level Two cars.

The agency said it requested the data in order to carry out a "comparative analysis" of cars fitted with Level Two driver assistance systems. It aims to establish whether crashing into stationary vehicles is specific to Tesla or a wider issue with automation in general.

The carmakers contacted by the NHTSA must respond or face a fine of up to €97 million, the agency said.

Tesla under investigation

The investigation into Tesla's Autopilot system was launched in August after a series of accidents involving the company's cars and stationary emergency services vehicles in the US.

Last month, the NHTSA said it had identified 11 crashes since 2018 in which Teslas on Autopilot or Traffic-Aware Cruise Control hit vehicles at scenes where first responders were using flashing lights, flares, an illuminated arrow board, or cones warning of hazards.

The probe covered accidents resulting in 17 injuries and one death, before a further fatal crash in New York was added to the agency's investigation earlier this month.

Vehicles with Level Two automation systems like Tesla's are described by the Society of Automotive Engineers as "partially automated". Officially, Level Two systems require the driver to pay full attention, "remain engaged with the driving task and monitor the environment at all times".

Tesla has previously said that Autopilot is a driver-assist system and that drivers must be ready to intervene at all times.

Driven to distraction

However, a report by researchers from the Massachusetts Institute of Technology (MIT) published on Tuesday suggests that drivers using Autopilot may pay less attention to the road than those driving without.

The data revealed that while the Autopilot function - which can control lane changes and maintain a safe separation from other vehicles - was switched on, drivers looked away from the road ahead more often and for longer periods.

"Visual behaviour patterns change before and after Autopilot disengagement. Before disengagement, drivers looked less on road and focused more on non-driving related areas compared to after the transition to manual driving," the researchers wrote.

The study used data from an ongoing MIT study of driver automation which employs cameras tracking Tesla drivers' movements to determine their "glance pattern" - how long they spent looking at the road or elsewhere while driving.

"The higher proportion of off-road glances before disengagement to manual driving were not compensated by longer glances ahead," they added.

The study also found that off-road glances tended to be longer with Autopilot than without, and glances at the Tesla's centre console - home to the vehicle's large infotainment screen - were up to 0.9 seconds longer while the system was in use.

Musk’s bold claims

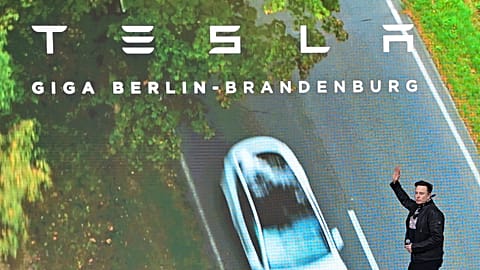

In April, Tesla CEO Elon Musk tweeted about accident data which he claimed showed that Teslas on Autopilot were almost ten times less likely to have an accident than the average vehicle.

The Tesla figures Musk cited showed that, on average, there was one accident for every 4.19 million miles (6.74 million km) driven with Autopilot engaged, and one for every 2.05 million miles (3.30 million km) for Teslas using the company's active safety features like automated braking and blind spot collision warnings.