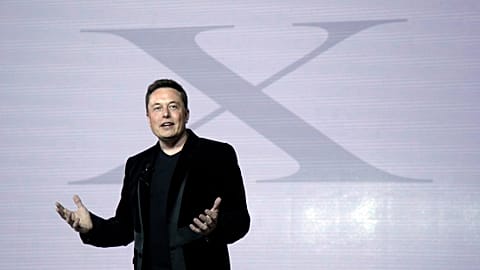

Experts fear Elon Musk’s vision for free speech on Twitter will increase hate speech, but others argue it may convince those with extremist views to come around.

This story was originally published on May 1, 2022.

Elon Musk has called himself a "free speech absolutist," which has alarmed rights groups and researchers who fear that Twitter could become a platform for extremist views and hate speech.

But striking a balance between protecting freedom of speech and ensuring hate speech does not cause radicalisation or violence is difficult.

The Tesla and SpaceX CEO has said that following his planned $44 billion (€41.05 billion) purchase of Twitter, he is “against censorship that goes far beyond law” and will reform what he believes to be overzealous moderation of tweets.

"Fundamentally, we have an extremism problem; whether it's QAnon or stop the steal or anti-vaxxers," said Karen Kornbluh, former US ambassador to the Organisation for Economic Co-operation and Development (OECD) and now director of The German Marshall Fund's Digital Innovation and Democracy Initiative.

Referring to former US president Donald Trump, who was permanently banned from Twitter for spreading false information and blamed for inciting the January 2021 riot on Capitol Hill, she told Euronews Next "it’s [the problem] not trivial".

"Anything that cuts against doing something to address violent extremism is a problem for democracy and democratic values," Kornbluh said.

"The FBI warns about violent extremism and domestic organising online and Elon Musk seems either not to understand that or not to care about it and he thinks content moderation is a bad thing".

'Not black and white'

But Musk has highlighted that laws will protect the platform against hate speech and calls to violence.

This may prove enough to ensure all views can be represented on Twitter while preventing the spread of hate speech and calls for violence, argues the CEO of one social media platform that does not ban users or overly moderate content.

"Freedom of speech is really not black and white. This is very messy territory. We're dealing with humanity," Bill Ottman, the CEO and co-founder of Minds, told Euronews Next.

The company is a blockchain-based social media platform that uses open-source code. Users can control the type of content they see via an algorithm. But calls for violence and illegal content are taken down.

Ottman argues that if you ban more extreme content and users, those users suddenly have no chance of being deradicalised because they go off "into other darker corners of the internet, where it's likely just going to get worse".

"So I try to approach the free speech concept from, honestly, also from a mental health angle," he said.

Changing minds

The CEO also believes that through discourse happening on social media, people with extreme views can be convinced to come around to rational thinking.

Minds has partnered with the blues musician Daryl Davis, a Black man who has deradicalised more than 200 members of the Ku Klux Klan by befriending them.

"For a Black man to go and befriend KKK members, it sounds completely crazy. But actually, that is what can have a real effect. If you just abandon these people, then guess what? They're not going to change," said Ottman.

While Ottman admits it can take years to change the minds of those who are radicalised, and that it sometimes does not work at all, he believes the approach could work on social media.

"If you see a post that is hateful on one side you could get super offended and it ruins your day. But if you look at it through Daryl's lens, you could see it as an opportunity for dialogue," he said.

"It doesn't mean that you're necessarily going to get them to change their mind. In fact, what Daryl tells us is that, you know, these conversations take years".

But allowing all views on social media is a difficult balance to strike: Minds has faced criticism for hosting neo-Nazi content.

'Total control'

The other issue is how social media platforms use algorithms, which extract data about users and then use the information to keep them on the site for as long as possible, earning the social media sites more money through advertising.

The algorithms can also control what users are seeing, whether it is far-left or far-right content.

Musk has said that he plans to make the algorithms open-source, which would make the platform more transparent about what is being promoted or demoted and whether shadow banning, the process of blocking certain content or users, is happening.

But another issue is having just one person in control of Twitter.

If Musk’s purchase is approved by shareholders, he would be solely in charge of the platform.

"This is a process of total control over the platform, not just 14 per cent. It's more than that," said Miguel Moreno, a professor and vice dean of infrastructure, sustainability and digitisation at the University of Granada.

"It gives the power to change the technological core of the platform, even with transparency in the use of the algorithm there is a broad margin to apply interest and influence in subtle directions," he told Euronews Next.

However, in reality, Musk may not be able to change the platform that much with regard to moderation.

The Digital Services Act will come into force next year in Europe. The European Commission proposal aims to create a safer digital space and means Musk will have to continue Twitter’s current moderation policies or face fines of up to six per cent of Twitter’s global turnover, and even a ban on the platform operating in the EU.

"Freedom of speech means different things in different places. What Musk is proposing is freedom without responsibility," said Robin Mansell, Professor of New Media and the Internet at the London School of Economics and Political Science.

"If a platform is not moderated it becomes a platform for political controversy and those who don’t enjoy that will leave," she told Euronews Next.

"I don't think the platform will change that much due to regulation from different countries and he will be concerned about the bottom line and how much money the platform makes".