Italian scientists are developing humanoid robots that can observe and learn from human behaviour. Those robots can then collaborate with humans across a range of sectors.

Scientists predict that in the future robots will interact with us more and more in daily life.

A new research project underway in Italy is developing behavioural models that can be applied to humanoid robots and make them our partners in the workplace.

The AnDy Project’s field of application ranges from customer service to healthcare and the industrial sector.

Sensors

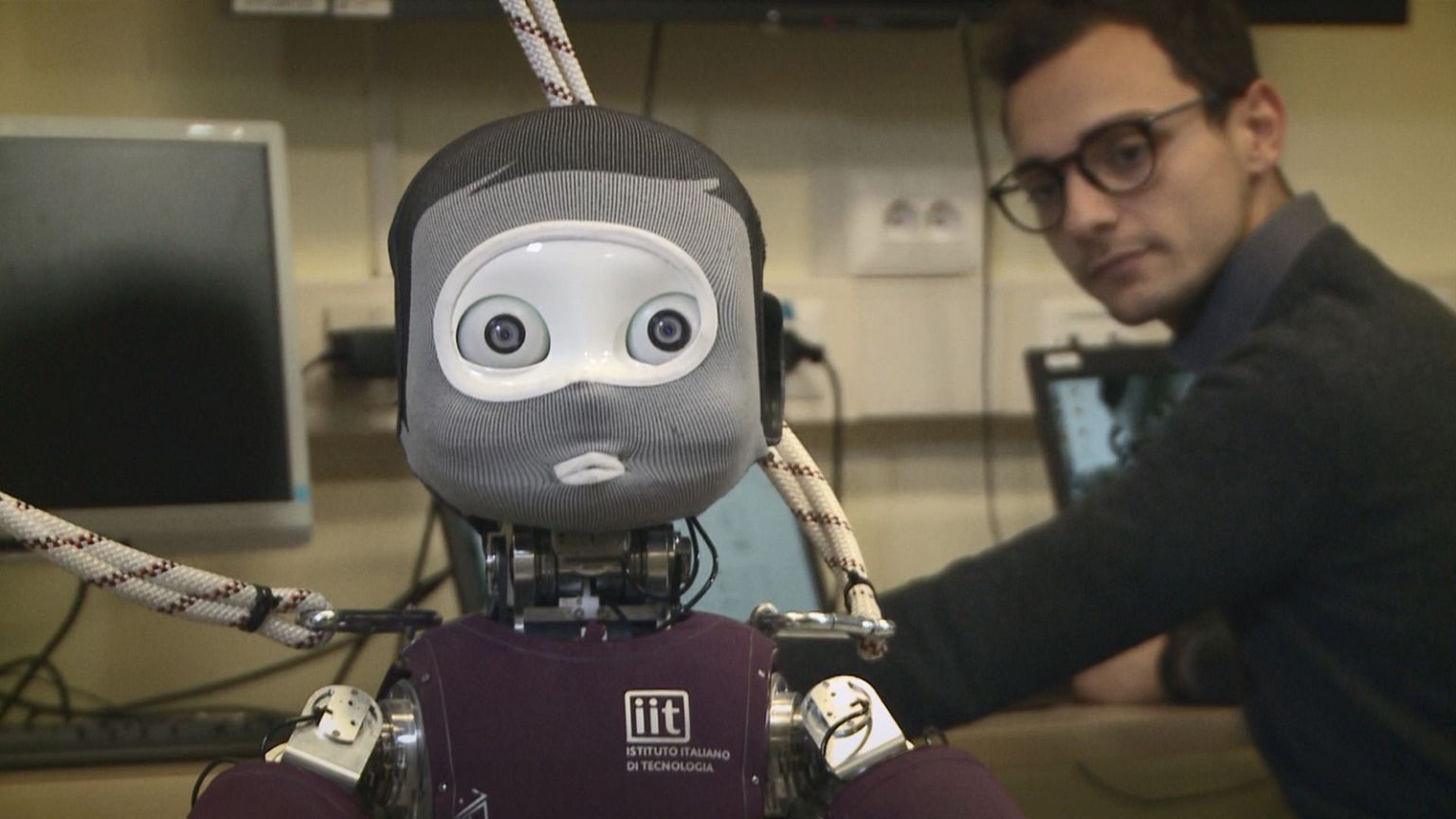

To ensure efficient collaboration, researchers at the Italian Institute of Technology (IIT) in Genoa are developing hardware and software that allow humanoid machines to evaluate and predict human behaviour.

Daniele Pucci is a Principal Investigator on the AnDy Project:

“The robot has several sensors to understand how the human is moving,” he explains. “The presence of the human being is detected, first, by sight. Secondly, during the interaction, the robot is able to sense contact with the human being through his ‘skin’. Then, to allow the robot to be aware of the human’s actions, it needs to be equipped with sensors”.

These sensors are integrated into a special high-tech suit worn by the human subject. They detect the wearer’s movements and share that information with the robot in a fraction of a second, so the robot can react in almost real time.

“An algorithm calculates the intensity of our effort, what we call the human dynamics, and this information is transmitted to the robot,” says Claudia Latella, a PhD Fellow at the Institute. “In the future we imagine that the robot will even be able to predict our movements and thus help us perform common actions”.

iCub

The test robot is called iCub. As it learns from humans how to move, iCub could be helpful across a range of human activities: manufacturing, healthcare and assisted living.

The size and range of movement of each robot will be adapted to its particular function.

“The vision that we have of the final application of this type of robot is the personal assistant, which can be adapted in different ways, from the rehabilitation robot, to the one for assisted living,” says Giorgio Metta, Vice Scientific Director at IIT. “Obviously, the technologies we have developed for this kind of robot can also be used in the industrial sector”.

Learning on the job

Another feature under development is iCub’s ability to learn new information – such as recognising a new object – from spoken instructions, without the direct intervention of a programmer.

“As we can talk with the robot, I can tell him, for example: ‘Look at this, this is a smartphone’, and so allow the user to add other things the robot needs to know,” Metta explains. “The robot acquires the image and builds a new category by himself, the category of the smartphone”.

Smile!

Researchers working on this European project are also developing facial expressions for the humanoid, to allow it to be more empathetic with potential partners, patients or elderly people.

“We need to integrate the cognitive abilities of the robot – the fact that he recognises the presence of the human being – with his motor skills, the fact that he is able to walk, collaborate and interact with human beings. We expect to reach this goal in the next 10 to 15 years,” says Pucci.

According to the researchers, future human–robot collaboration will be limited to robots assisting humans in the workplace; they say robots won’t completely replace their human colleagues.