Can an AI-generated drag show reveal the biases and limitations of the technology? The Zizi Show, a “deepfake drag cabaret”, on display at the V&A Museum in London, hopes to investigate just that.

On-screen, a drag queen bedecked in a sparkling bodysuit drops into the splits.

The two other AI-generated queens flanking her follow her movement, but as they lower to the ground, their legs suddenly lose form, melting, multiplying and blending into the colours and forms of their outfits.

Faced with an unfamiliar situation - doing the splits - artificial intelligence was unable to adapt all three queens’ movements, resulting in a meltdown of the technology.

The Zizi Show is designed to capture these moments of chaos and imperfection, displaying the glitches in artificial intelligence. The exhibit on show at the V&A Museum is part of a larger project, the Zizi Project, which artist, coder, and producer Jake Elwes started working on about five years ago.

“It’s exploring the intersection of drag performance and artificial intelligence”, they tell Euronews Culture.

“Basically the idea is that artificial intelligence has a lot of issues. [It has] been trained on data sets that have a bias towards normativity, so trained on often very particular kinds of people. My idea was, what if we trained AI just on images of otherness, on queerness, on difference?”

How to put together an AI drag show

The generation of the Zizi Show began during lockdown.

Elwes had received funding to work on a project reflecting queer resilience as the Covid-19 pandemic halted everyone’s lives. The performer Me The Drag Queen was a main collaborator in building the project, helping Elwes initially find 13 drag performers from the London scene. The team then set up video studios in empty venues shut down by the health crisis.

“We filmed each [drag performer] for about three minutes, walking and moving through the space, and that became our data set”, explains Elwes.

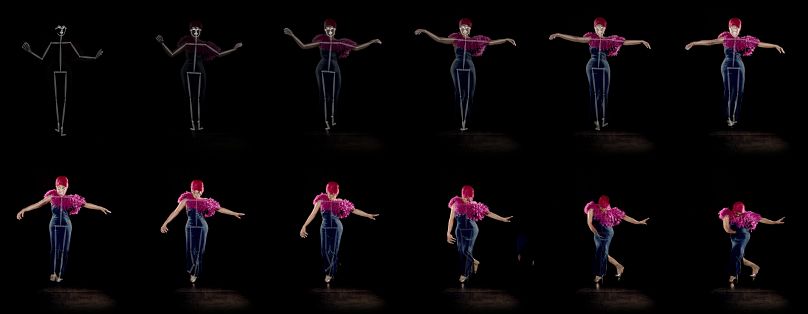

The resulting content provided about 7,000 frames, which were then used to create skeletons following the drag performers’ movements.

To create the AI drag show, Elwes then applied these skeletons, each unique to the movements of one drag artist, to the AI-generated images of the other performers. After receiving more funding, the number of drag performers went from 13 to 21.

Some performers ended up moving in ways the others never did when originally recorded. Take the three queens who tried to do the splits: only one actually performed that movement during the recording. Another wore a breastplate during the recording, but the garment was unfamiliar to the AI. As a result, when the AI tried to adapt the queen’s movements, the breastplate stayed still but multiplied.

The future of AI: All doom and gloom?

“Drag was a perfect form of gender non-conformity to investigate gender biases, something really accessible and fun,” Elwes tells Euronews Culture. They say they hope the Zizi Show can help people understand how AI works, “demystifying” the technology to slow the “fear-mongering”.

“I want to see more people using things like DALL·E or Midjourney with awareness of where the data is coming from and who is training it.”

Despite the risks inherent in the new technology, such as the misuse of AI for deepfake content, Elwes feels “hopeful” about its future.

“I don’t feel like I am super dystopic”, they say, as long as people continue to engage critically with AI rather than accepting that everything is lost.

Jake Elwes’s exhibit Data • Glitch • Utopia is also on at the Gazelli Art House in London until 8 July.